Industrial Agentic Engineering with NeqSim: AI Agents for Engineering Task Solving in Industry

Copyright © 2026 Equinor ASA and the Norwegian University of Science and Technology (NTNU). All rights reserved.

This work is the intellectual property of Equinor ASA and NTNU. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, without the prior written permission of Equinor ASA and NTNU.

Published by Equinor ASA and NTNU

The NeqSim library is open-source software released under the Apache License 2.0. All code examples in this book are available at https://github.com/equinor/neqsim and may be freely used and modified under the terms of that license.

Typeset using NeqSim PaperLab

To the engineers who solve real problems every day — and to the open-source community that makes the tools to help them do it better.

Preface

This book was born from a simple observation: the oil and gas industry spends billions of dollars annually on engineering calculations that are fundamentally well-understood — thermodynamic property estimation, phase equilibrium, process simulation, pipeline hydraulics, equipment sizing, and standards compliance — yet the workflow for performing these calculations has barely changed in thirty years. Engineers navigate complex graphical user interfaces, manually transfer numbers between spreadsheets and simulators, copy results into reports, and start from scratch for every new project.

Meanwhile, a revolution in artificial intelligence has produced large language models (LLMs) that can understand natural language, reason about complex problems, write code, and interpret results. But these models have a critical weakness: they hallucinate physics. Ask an LLM for the density of methane at 200 bara and you may get a plausible-sounding but wrong number. Ask it to solve a cubic equation of state and it will produce confident nonsense.

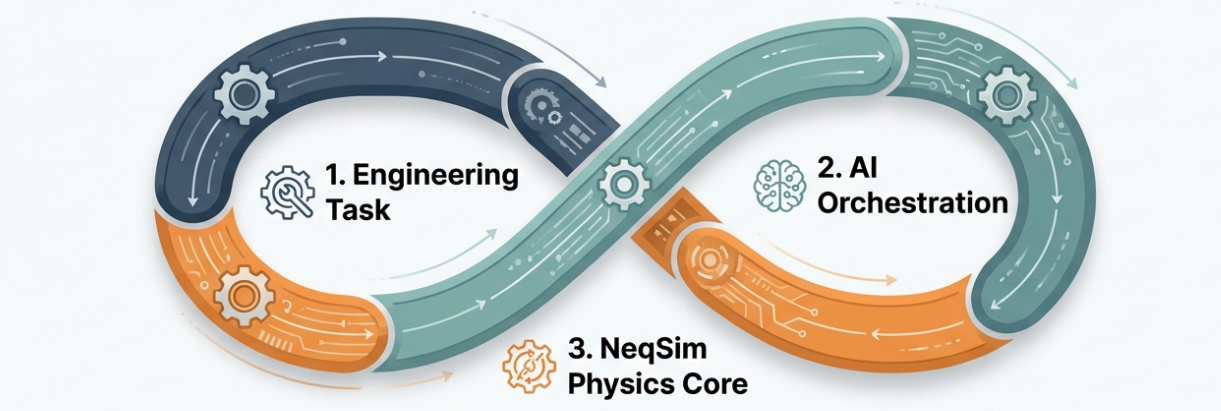

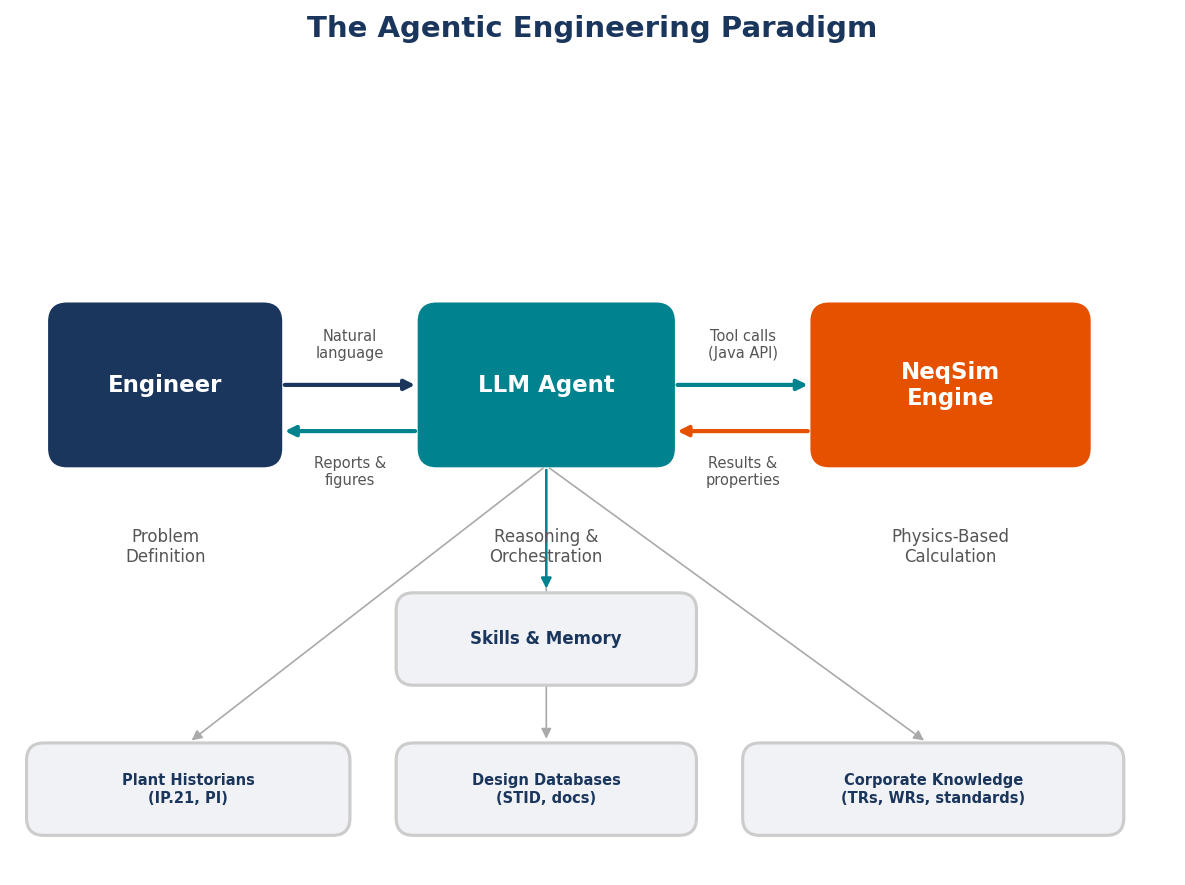

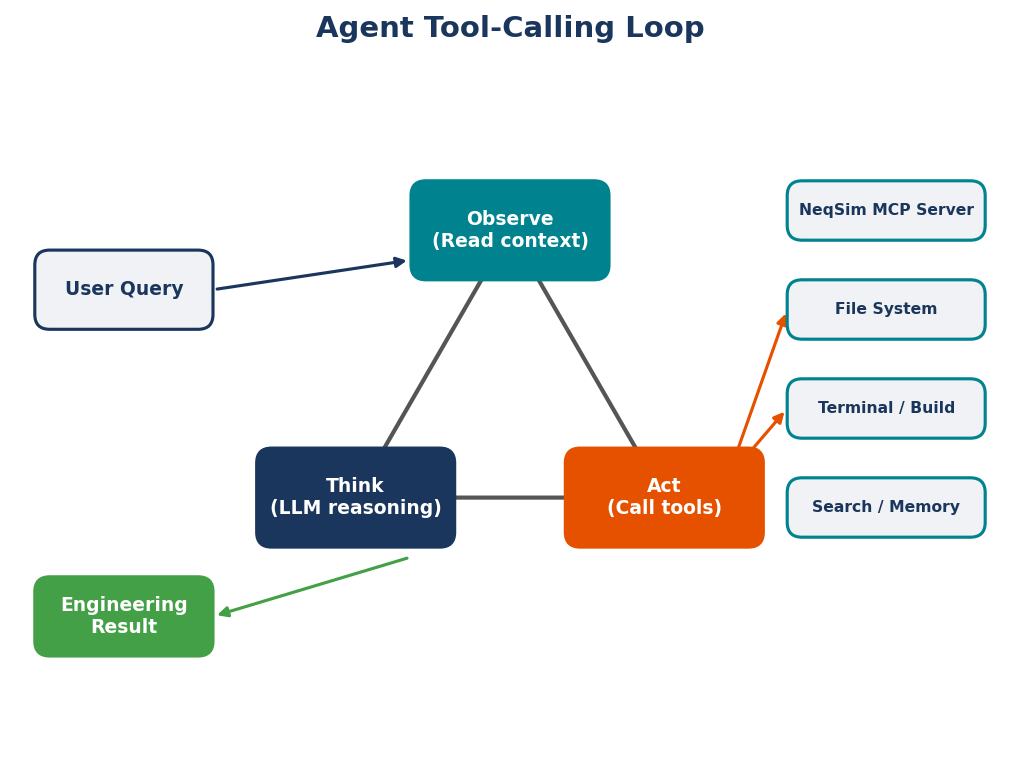

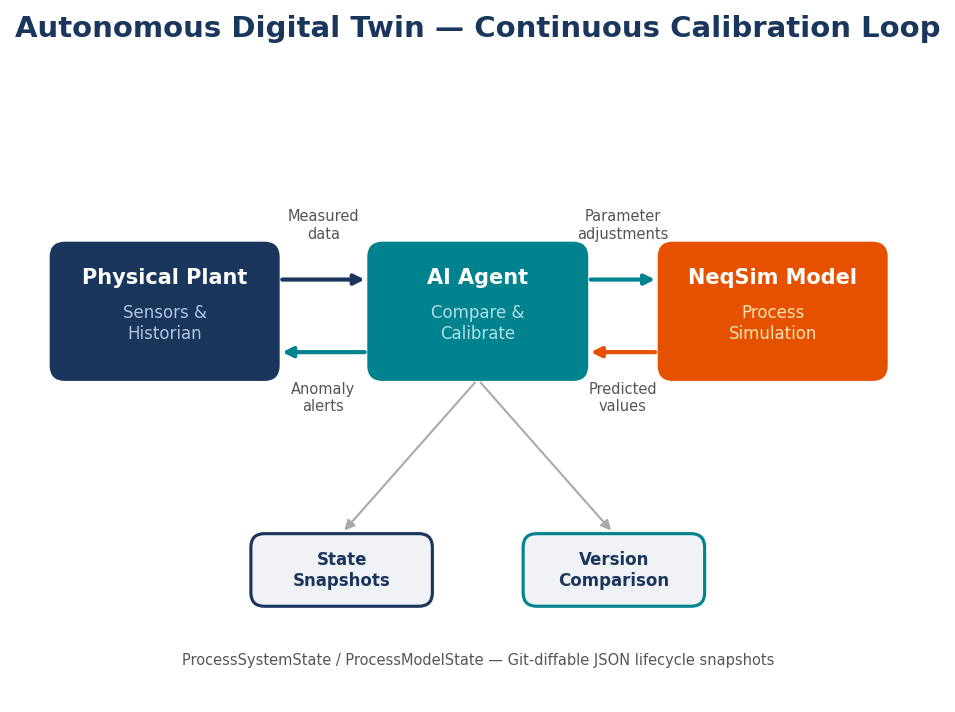

Agentic engineering solves this problem by combining what AI does well — understanding intent, selecting methods, writing code, interpreting results — with what physics engines do well: exact numerical computation. The AI agent writes the simulation code; the physics engine runs it; the agent interprets the results and generates reports. Neither component works well alone. Together, they form something genuinely new: a system where an engineer can describe a problem in plain language and receive a validated, documented, standards-compliant engineering analysis in return.

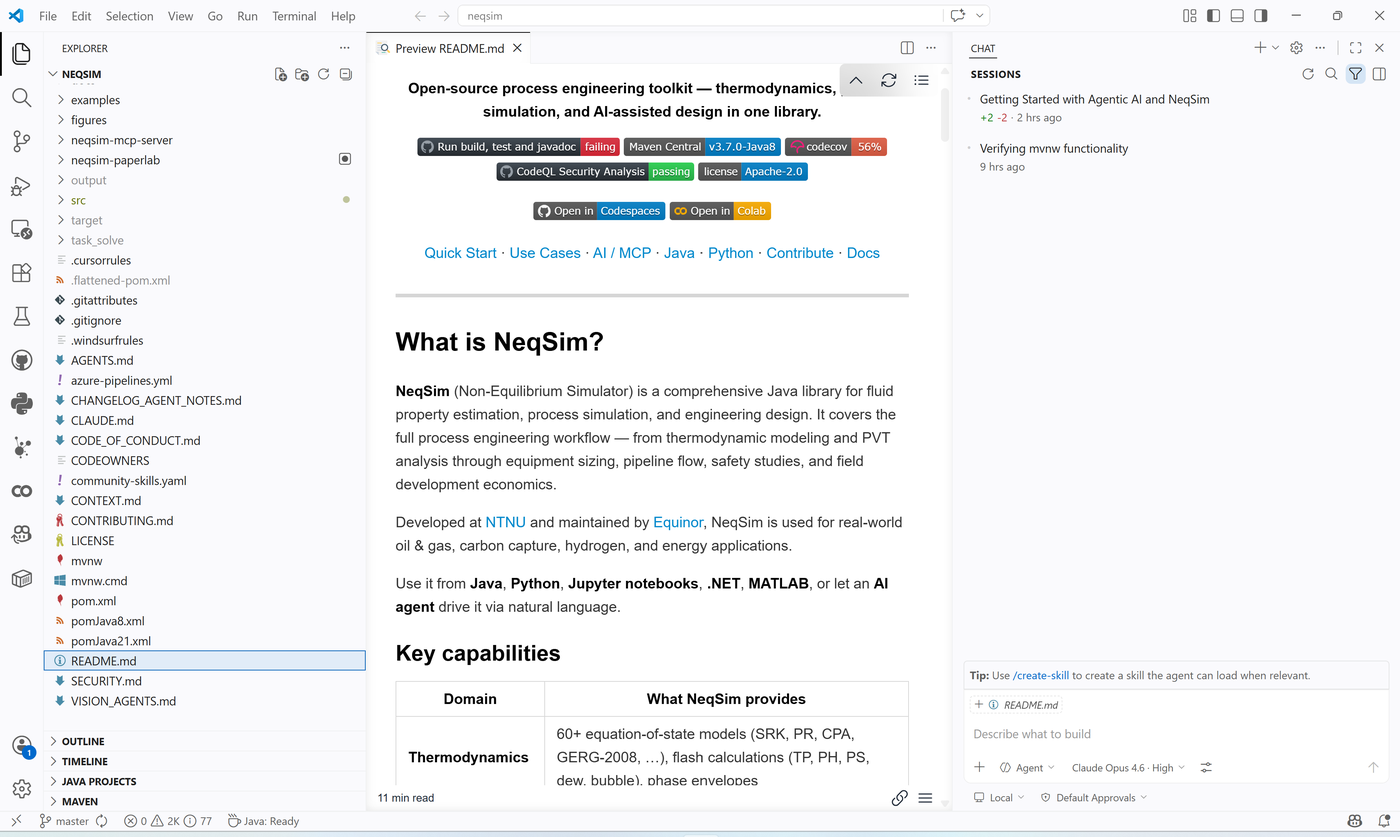

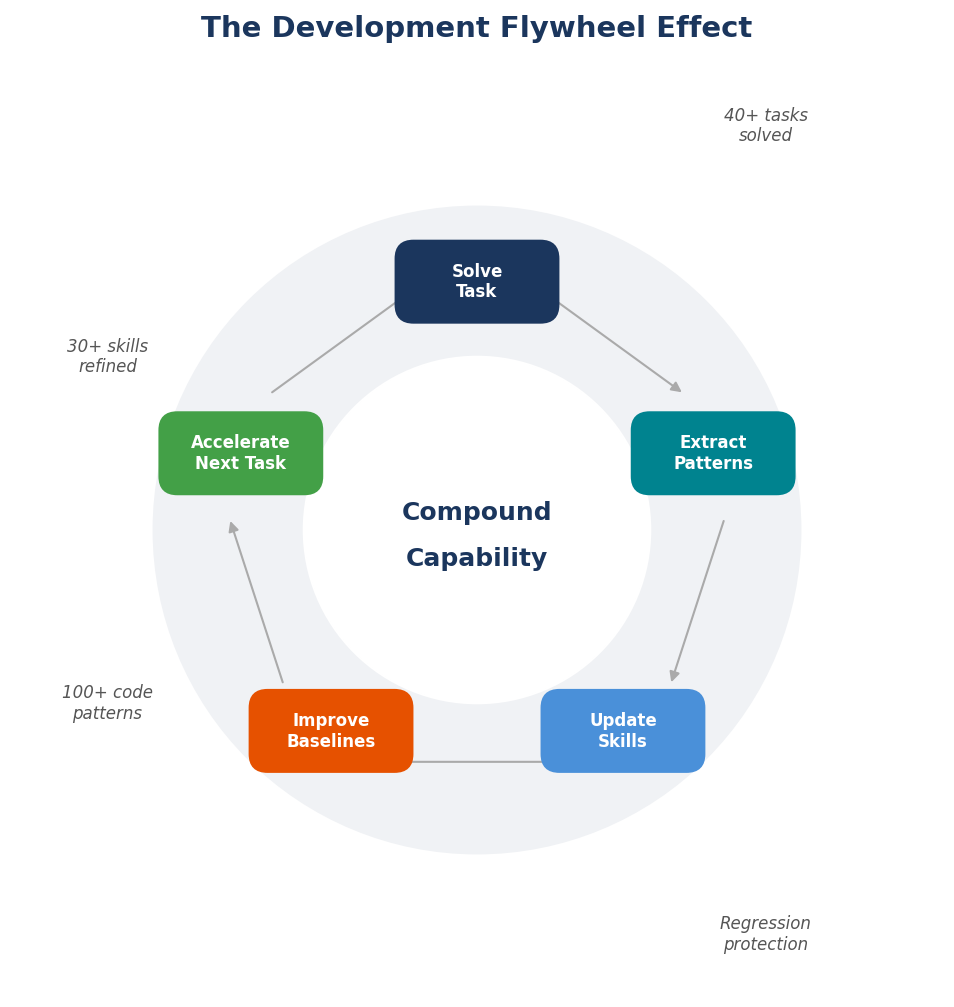

This book documents how we built such a system using NeqSim, an open-source Java library for thermodynamics and process simulation, integrated with AI agents running in Visual Studio Code via GitHub Copilot. The system has been used to solve over 40 real engineering tasks on the Norwegian Continental Shelf, from CNG tank filling temperature estimation to full Class A field development concept studies for floating LNG facilities.

Who This Book Is For

This book is written for three audiences:

- Process engineers and reservoir engineers who want to understand how AI agents can accelerate their daily work without sacrificing physical accuracy or standards compliance

- Software engineers and AI practitioners who want to understand how to build reliable tool-using AI systems for scientific and engineering domains

- Engineering managers and technical leaders who need to evaluate whether agentic engineering is ready for their organization and what governance is required

How This Book Is Organized

Part I (Chapters 1–4) lays the foundation: getting started with the system, why agentic engineering matters, how the NeqSim physics engine works, and the principles of AI agent architecture.

Part II (Chapters 5–8) describes the platform: the multi-agent system, the skills library, the task-solving workflow, and the MCP server that enables governed deployment of engineering calculations.

Part III (Chapters 9–11) presents worked examples at increasing complexity: from single property lookups to full process simulations to multi-discipline flow assurance studies.

Part IV (Chapters 12–13) covers industrial application: real case studies from the Norwegian Continental Shelf and a look at where agentic engineering is heading.

Reproducibility

Every code example in this book can be reproduced using the open-source NeqSim library. Install with:

pip install neqsim

All agent interactions described in the worked examples use GitHub Copilot in VS Code with the NeqSim repository open as the workspace. The full source code, agent definitions, skill files, and example notebooks are available at https://github.com/equinor/neqsim.

Acknowledgements

This work would not have been possible without the contributions of the NeqSim open-source community, the thermodynamics research group at NTNU, and the technology teams at Equinor who provided real-world engineering problems to solve. Special thanks to the teams who tested early versions of the agentic workflow on production platform models and field development studies.

Even Solbraa

Trondheim, April 2026

Part I: Foundations

1 Getting Started

Learning Objectives

After reading this chapter, the reader will be able to:

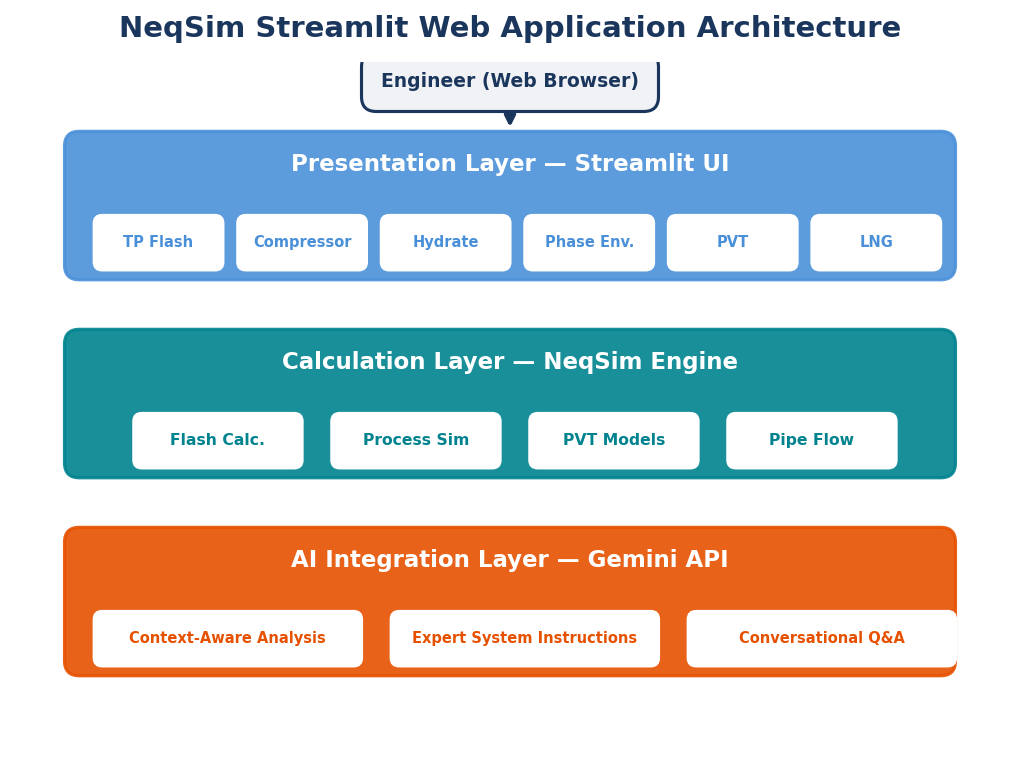

- Understand the four-layer architecture: Agents, Skills, Physics Engine, and Data

- Set up a complete agentic engineering environment — either locally or in the cloud

- Install the required software tools: Git, Python, Java, VS Code, and an AI coding assistant

- Clone the NeqSim repository and verify a working build

- Run a first AI-assisted engineering calculation using natural language

Quick Start — Three Commands

If you want to skip the explanations and get running immediately, here is all you need:

git clone https://github.com/equinor/neqsim.git && cd neqsim

pip install -e devtools/ # one-time: registers the `neqsim` command

neqsim onboard # interactive setup (Java, Maven, build, Python, agents)

Or skip local setup entirely: Open in GitHub Codespaces at https://github.com/equinor/neqsim — everything is pre-installed in the browser.

Once set up, explore and contribute:

neqsim try # interactive playground — experiment with NeqSim instantly

neqsim contribute # guided wizard — picks the right contribution path for you

neqsim doctor # quick diagnostic if something isn't working

The rest of this chapter explains each step in detail.

---

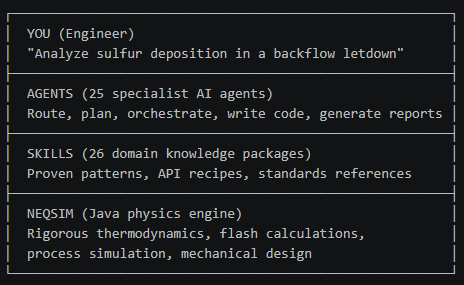

1.1 The Four-Layer Stack

Agentic engineering with NeqSim is built on four layers. Each layer has a distinct role, and understanding them is the key to understanding the entire system.

Layer 1: Agents — The Reasoning Layer

At the top of the stack are AI agents — large language models (LLMs) such as Claude, GPT, or Gemini running inside a development environment like VS Code with GitHub Copilot. Agents are the layer you interact with directly. You describe an engineering problem in natural language, and the agent reasons about what needs to be done: which calculations to run, which standards apply, what validation is required, and how to present the results.

Agents can read files, write code, run simulations, create figures, and generate reports — all autonomously. They are powerful reasoners but unreliable calculators. An agent can correctly decide that the density of methane at 200 bara requires the SRK equation of state with volume translation, but it cannot solve the cubic equation itself. That is why the agent delegates computation to the physics engine below.

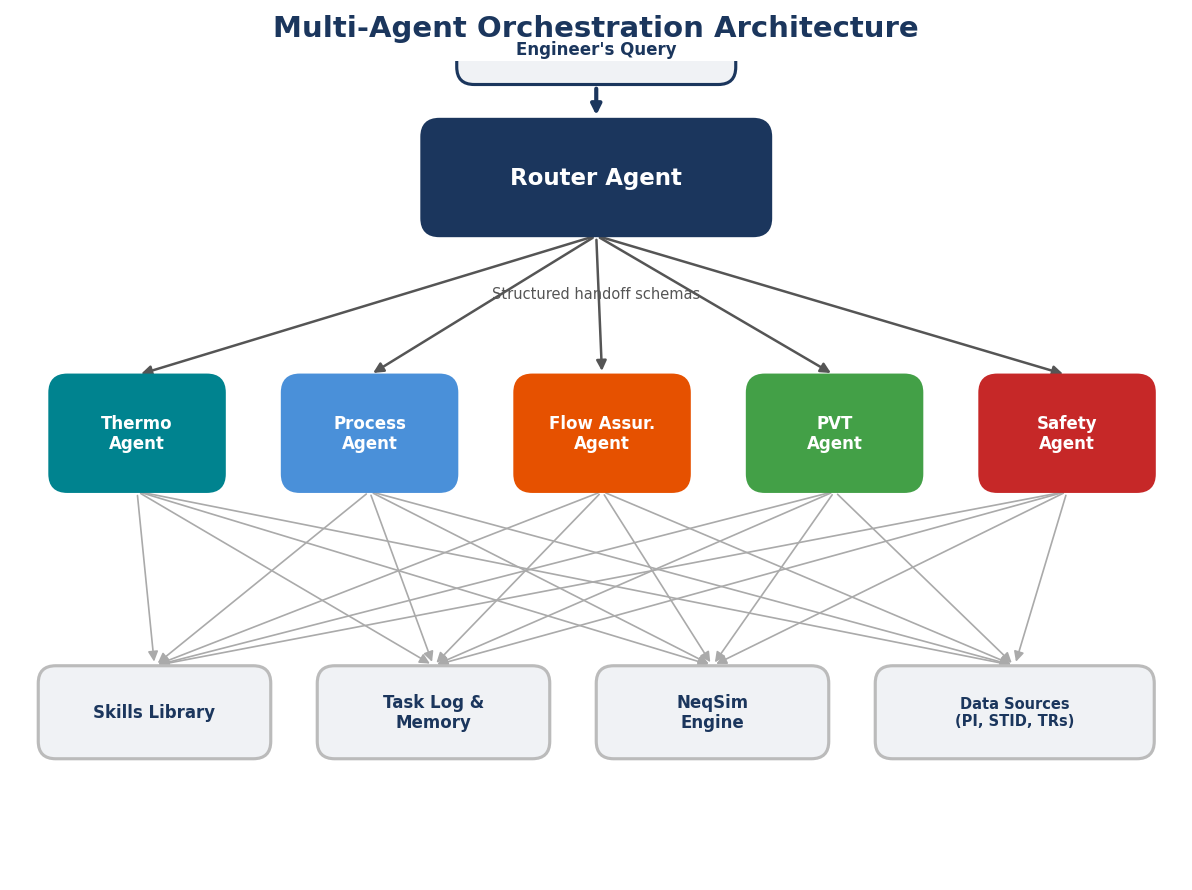

NeqSim includes 25+ specialist agents — for process simulation, flow assurance, field development, PVT analysis, mechanical design, safety studies, and more. Chapter 5 covers the multi-agent system in detail.

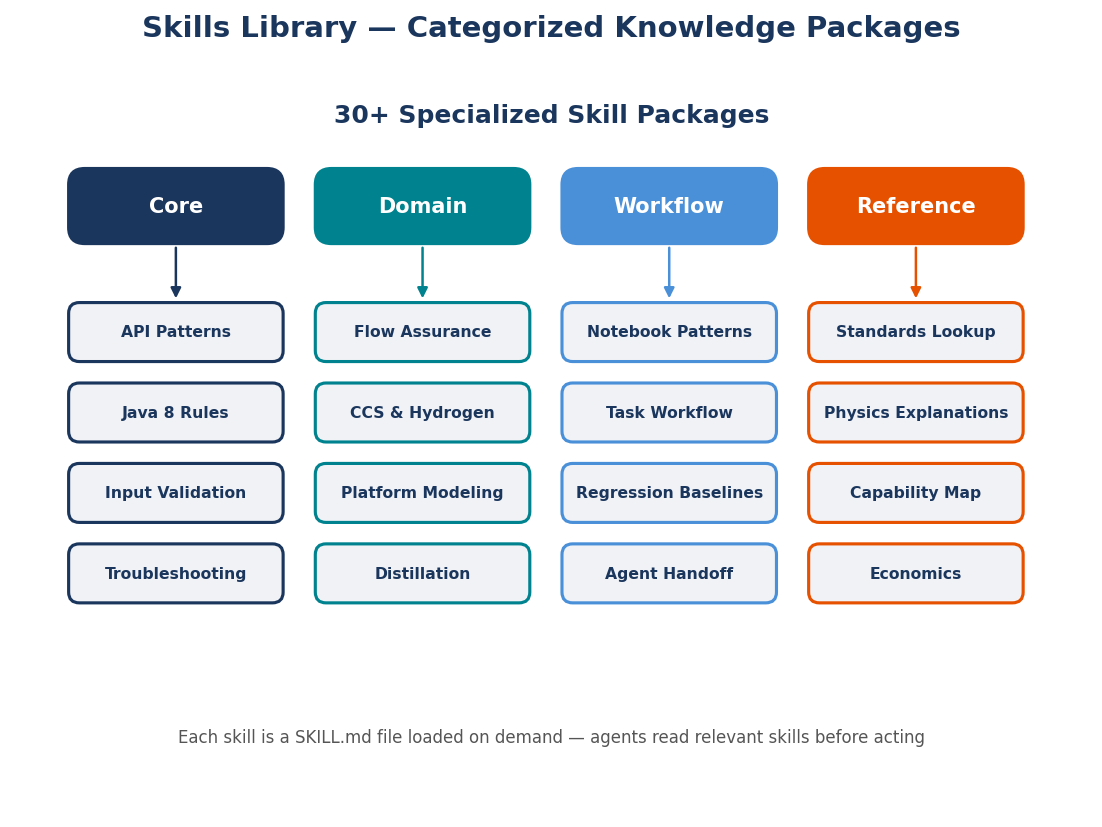

Layer 2: Skills — The Knowledge Layer

Between the agents and the physics engine sits the skills layer — a library of markdown files containing tested engineering knowledge. Each skill is a SKILL.md file with code patterns, design rules, standards references, and common mistakes for a specific topic. When an agent receives a task, it loads relevant skills before generating any code — using verified patterns instead of guessing.

For example, the neqsim-flow-assurance skill contains hydrate prediction patterns, pipeline pressure drop code, and corrosion rate correlations, all tested against the actual NeqSim API. The neqsim-platform-modeling skill contains patterns for building multi-stage separation trains with recycles and compressor anti-surge control, derived from 15+ production platform models on the Norwegian Continental Shelf.

Skills come in three tiers:

| Tier | Location | Visibility | Example |

|---|---|---|---|

| Core | .github/skills/ in the NeqSim repo |

All users, automatically | neqsim-flow-assurance |

| Community | Your GitHub repo, listed in community-skills.yaml |

Anyone who installs it | neqsim-ncs-fiscal-terms |

| Local | Your workspace or private repo | Only you | company-separator-sizing |

The current core includes 26+ skills covering thermodynamics, process equipment, PVT, standards, mechanical design, CCS and hydrogen, field economics, dynamic simulation, and platform modeling. Contributing a skill is the easiest way to make the agentic system smarter — no Java required. Chapter 6 covers the skills system in full detail.

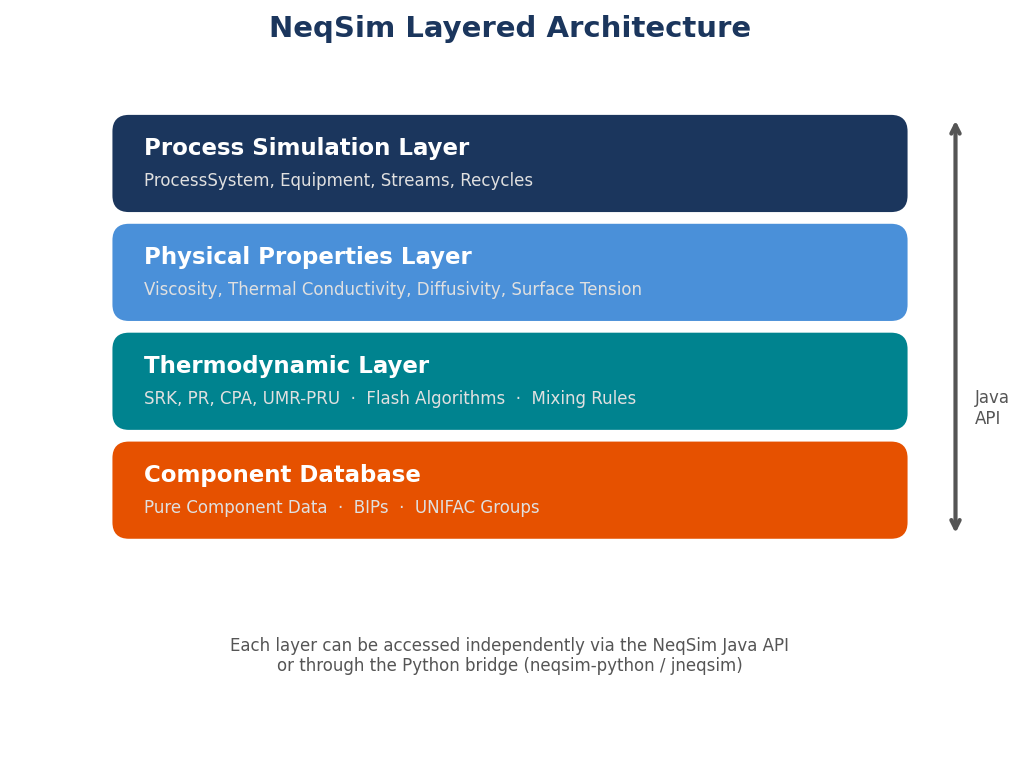

Layer 3: Physics Engine — The Computation Layer

The physics engine is NeqSim itself — a comprehensive Java library for thermodynamic modeling, process simulation, and engineering design. When an agent needs to calculate a physical property, run a flash calculation, or simulate a piece of process equipment, it writes code that calls the NeqSim API. The engine performs the rigorous numerical computation and returns exact results.

NeqSim (Non-Equilibrium Simulator) was originally developed at the Department of Energy and Process Engineering at NTNU and is maintained by Equinor. It handles fluids such as natural gas, oil, carbon dioxide, hydrogen, ammonia, refrigerants, water, and industrial chemicals. Key capabilities include:

- Thermodynamics — 60+ equation-of-state models (SRK, PR, CPA, UMR-PRU, GERG-2008), mixing rules, and transport property correlations

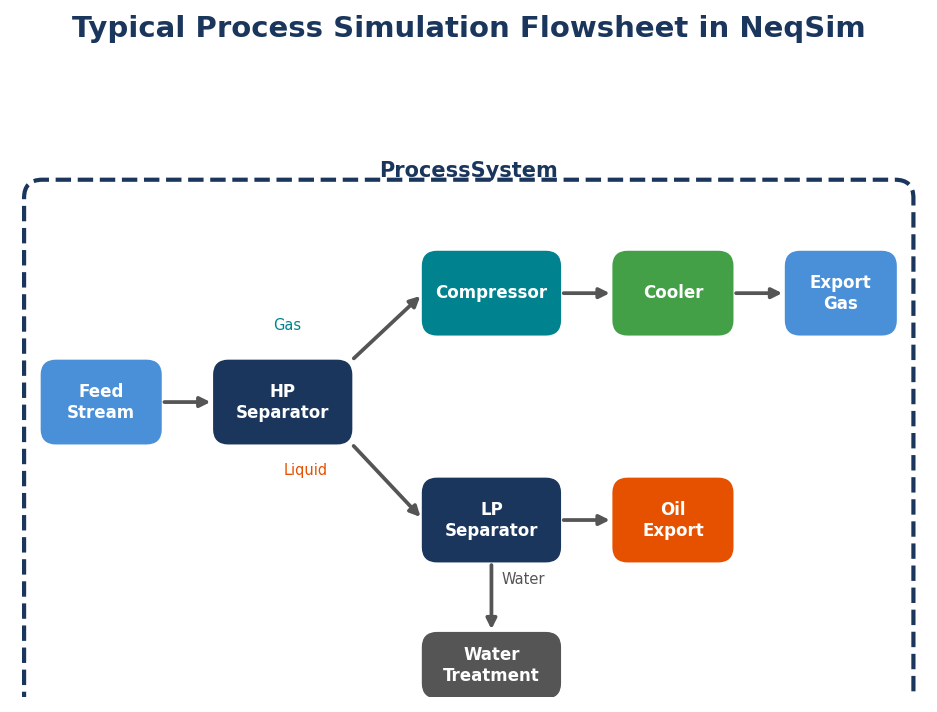

- Process simulation — 33+ equipment types including separators, compressors, heat exchangers, distillation columns, reactors, and complete process trains with recycles

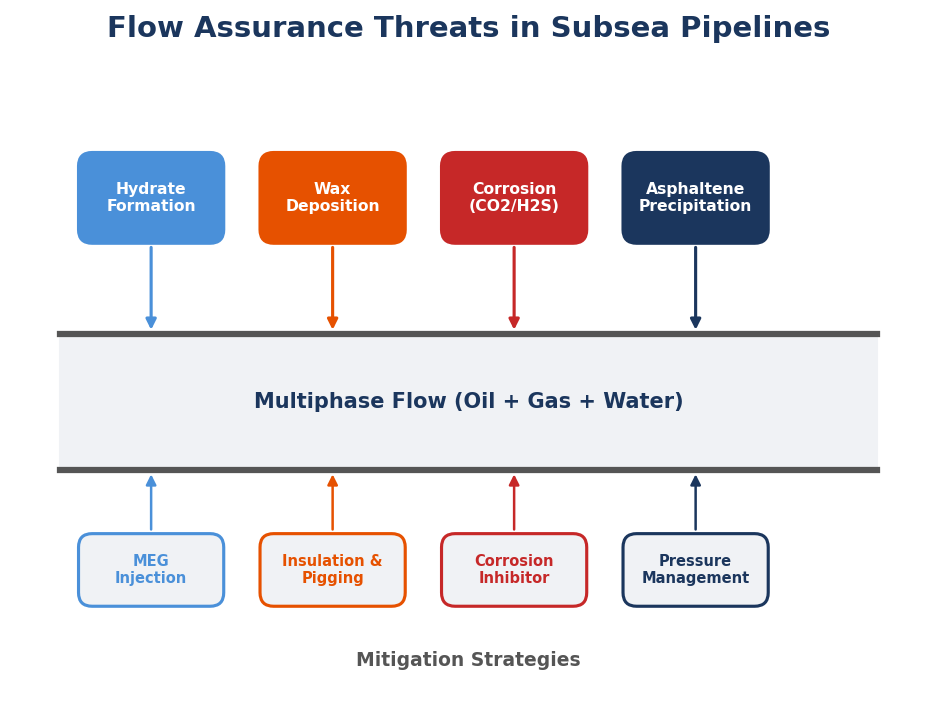

- PVT and flow assurance — phase envelopes, hydrate prediction, wax, asphaltene, corrosion, and multiphase pipe flow

- Field development — production forecasting, concept selection, NPV/IRR economics, and uncertainty analysis

Chapter 3 provides a thorough introduction to the physics engine.

Layer 4: Data — The Foundation

At the base of the stack is data — the inputs that feed the physics engine and the outputs that agents interpret. Data includes:

- Fluid compositions — lab analyses, PVT reports, well test data

- Operating conditions — pressures, temperatures, flow rates from design basis documents or plant historians (OSIsoft PI, Aspen IP.21)

- Design parameters — equipment specifications, piping data, material properties

- Standards and regulations — API, NORSOK, DNV, ISO, ASME requirements encoded in skills and CSV databases

- Benchmarks and validation — NIST reference data, published experimental results, vendor performance curves

Data can come from files in the repository, from documents the agent reads (PDFs, Excel, Word), or from live connections to plant data historians. The agent reads and interprets data; the physics engine consumes it for calculation.

How the Layers Work Together

A typical interaction flows through all four layers. You ask an agent (Layer 1) to calculate the hydrate formation temperature for a gas pipeline. The agent loads the flow assurance skill (Layer 2), which contains the correct NeqSim code pattern for hydrate prediction. The agent writes code that calls the NeqSim thermodynamic engine (Layer 3) with the gas composition and pipeline conditions from the design basis (Layer 4). The engine returns a precise temperature; the agent formats the result, compares it to the standard requirement, and reports whether the pipeline is safe to operate.

No single layer works well alone. Agents without a physics engine hallucinate numbers. A physics engine without skills requires expert users. Skills without agents are static documentation. Data without any of these is just files on a disk. Together, the four layers form an integrated system for engineering task solving.

There are two paths to getting started:

| Path | Best for | What you need |

|---|---|---|

| GitHub Codespaces (cloud) | Trying it out, no local install | A GitHub account and a web browser |

| Local installation | Regular use, full performance | A computer with Windows, macOS, or Linux |

We describe both paths below.

1.2 Path A: GitHub Codespaces (Zero Install)

The fastest way to get started — with nothing to install — is GitHub Codespaces. This gives you a complete VS Code environment running in the cloud, with Java, Python, Maven, and NeqSim pre-configured.

Step 1. Go to https://github.com/equinor/neqsim

Step 2. Click the green "Code" button, then select the "Codespaces" tab

Step 3. Click "Create codespace on master"

GitHub will provision a virtual machine, install all dependencies, and open VS Code in your browser. After a few minutes, you will see the full NeqSim workspace — ready to use.

Step 4. Open the terminal (` Ctrl+ ``) and verify the setup:

neqsim doctor

This command checks that Java, Python, Maven, and the NeqSim build are all working correctly.

Step 5. Try your first calculation:

neqsim try

This opens an interactive playground where you can experiment with NeqSim immediately.

> Tip: Codespaces is ideal for trying things out, following along with examples in this book, or contributing small fixes. For regular daily use with large simulations, a local installation gives better performance.

1.3 Path B: Local Installation (Step by Step)

For a permanent setup on your own machine, follow these steps. The entire process takes about 15–20 minutes.

Step 1: Install Git

Git is a version control system that tracks changes to files over time and enables collaboration. The NeqSim source code is hosted on GitHub at https://github.com/equinor/neqsim. When you "clone" the repository, Git downloads a complete copy of the codebase to your computer.

Download Git from https://git-scm.com/downloads and install it for your platform:

- Windows: Download from

https://git-scm.com/download/winand run the installer. Accept the defaults. - macOS: Open Terminal and type

git --version. If Git is not installed, macOS will prompt you to install it. Alternatively, download the installer fromhttps://git-scm.com/download/mac. - Linux: Run

sudo apt install git(Ubuntu/Debian) orsudo dnf install git(Fedora).

Verify the installation by opening a new terminal and typing git --version.

You will also need a free GitHub account — sign up at https://github.com.

> New to Git? The free Pro Git book at https://git-scm.com/book covers everything from basic commits to branching workflows. For a quick interactive tutorial, try https://learngitbranching.js.org.

Step 2: Install Python

Python is the scripting language you will use to interact with NeqSim. The neqsim Python package provides a bridge to the Java engine via JPype, so you can call NeqSim's thermodynamic models from Python code and Jupyter notebooks.

- Go to

https://www.python.org/downloads/ - Download Python 3.12 or later

- Run the installer:

- Windows: Before clicking anything, check the box "Add python.exe to PATH" — this is critical. Then click "Install Now"

- macOS: Open the

.pkgfile and follow the prompts - Linux:

sudo apt install python3 python3-pip python3-venv(Ubuntu/Debian) orsudo dnf install python3 python3-pip(Fedora)

- Verify by opening a new terminal:

python --version

> Troubleshooting (Windows): If python --version says "not recognized," Python was not added to PATH. Uninstall via Settings → Apps, re-run the installer, and check the PATH box this time.

Step 3: Install Java

Java is the language the NeqSim physics engine is written in. You do not need to write Java — the Python interface handles the translation — but a Java Development Kit (JDK) must be installed so the engine can run.

- Go to

https://adoptium.net(Eclipse Temurin — a free, open-source JDK) - Select JDK 21 (LTS) and download the installer for your operating system

- Run the installer:

- Windows: Ensure "Set JAVA_HOME variable" and "Add to PATH" are both checked

- macOS: Open the

.pkgfile and follow the prompts - Linux:

sudo apt install temurin-21-jdk(Ubuntu — you may need to add the Adoptium repository first)

- Verify by opening a new terminal:

java -version

> Alternative JDKs: Amazon Corretto, Microsoft Build of OpenJDK, or Oracle JDK all work. Any version 17 or 21 is compatible.

> Troubleshooting (Windows): If java -version says "not recognized," re-run the installer and enable the JAVA_HOME and PATH options.

Step 4: Install VS Code and AI Assistant

VS Code is a free code editor from Microsoft. It is the recommended environment because it integrates tightly with GitHub Copilot, supporting AI agents that can read your files, run commands, and execute code — all within the editor.

- Download from

https://code.visualstudio.comand install - Open VS Code and install these extensions (

Ctrl+Shift+X):

| Extension | Purpose |

|---|---|

| GitHub Copilot | AI coding assistant and agent mode |

| GitHub Copilot Chat | Chat panel for agent interaction |

| Python | Python language support |

| Jupyter | Notebook support |

| Extension Pack for Java | Java development support |

GitHub Copilot is an AI coding assistant that runs inside VS Code. In its "agent mode," it can autonomously plan and execute multi-step engineering tasks: reading files, writing code, running simulations, creating figures, and generating reports. Copilot is a gateway to multiple LLMs — you can choose which model powers the agent:

| Model | Provider | Strengths |

|---|---|---|

| Claude Opus 4 | Anthropic | Excellent at long, multi-step engineering tasks |

| GPT-4o / o3 | OpenAI | Broad knowledge, fast responses, deep reasoning |

| Gemini 2.5 Pro | Strong mathematical reasoning |

The examples in this book were developed primarily with Claude and GPT models, but the workflows are model-agnostic — you can switch models at any time. Model versions change frequently; check GitHub Copilot for the latest available options.

You will need: A GitHub Copilot subscription — free tiers are available for students, open-source contributors, and individual developers.

Alternatives: Cursor, Windsurf, Claude Code (terminal-based), and OpenAI Codex all work with NeqSim. This book uses VS Code with Copilot, but the concepts transfer directly.

> Confidential work: For proprietary data, run a local model with Ollama (https://ollama.com) or LM Studio (https://lmstudio.ai) — nothing leaves your machine. Good options include Gemma 4 27B, Qwen 3 32B, and Llama 4 Scout. See Chapter 4 for details.

Step 5: Clone the Repository and Onboard

Open a terminal (in VS Code or your system terminal) and run:

git clone https://github.com/equinor/neqsim.git

cd neqsim

This downloads the entire NeqSim codebase. Next, install the developer tools and run the onboarding wizard:

pip install -e devtools/

neqsim onboard

The neqsim onboard command walks you through the remaining setup: it checks Java, builds the NeqSim JAR file (the compiled physics engine), installs the Python package, and verifies everything works together.

> Maven: The build tool that compiles NeqSim's Java code. You do not need to install it — the repository includes a Maven wrapper (mvnw on Linux/macOS, mvnw.cmd on Windows) that automatically downloads and installs the correct Maven version the first time you run it.

If anything goes wrong, run the diagnostic tool:

neqsim doctor

Step 6: Open in VS Code and Verify

If you cloned from a system terminal, open the project in VS Code:

code .

The repository structure:

neqsim/

├── src/ # Java source code (physics engine)

│ ├── main/java/neqsim/ # Main library

│ └── test/java/neqsim/ # Tests

├── examples/notebooks/ # 30+ Jupyter notebook examples

├── docs/ # Documentation (350+ pages)

├── devtools/ # Developer tools and CLI

├── .github/

│ ├── agents/ # AI agent definitions

│ └── skills/ # Domain knowledge packages

├── AGENTS.md # Agent instruction file

├── mvnw.cmd # Maven wrapper (Windows)

└── pom.xml # Build configuration

Verify everything works by running your first calculation:

neqsim try

Or open the Copilot Chat panel (Ctrl+Shift+I) and type:

Calculate the density of methane at 100 bar and 25°C

The agent will write NeqSim code, execute it, and return the result — a density value calculated from the SRK equation of state.

1.4 Your First Agentic Engineering Session

Once your environment is set up (either via Codespaces or local installation), let us walk through a more substantial example.

Open the Copilot Chat panel in VS Code and type:

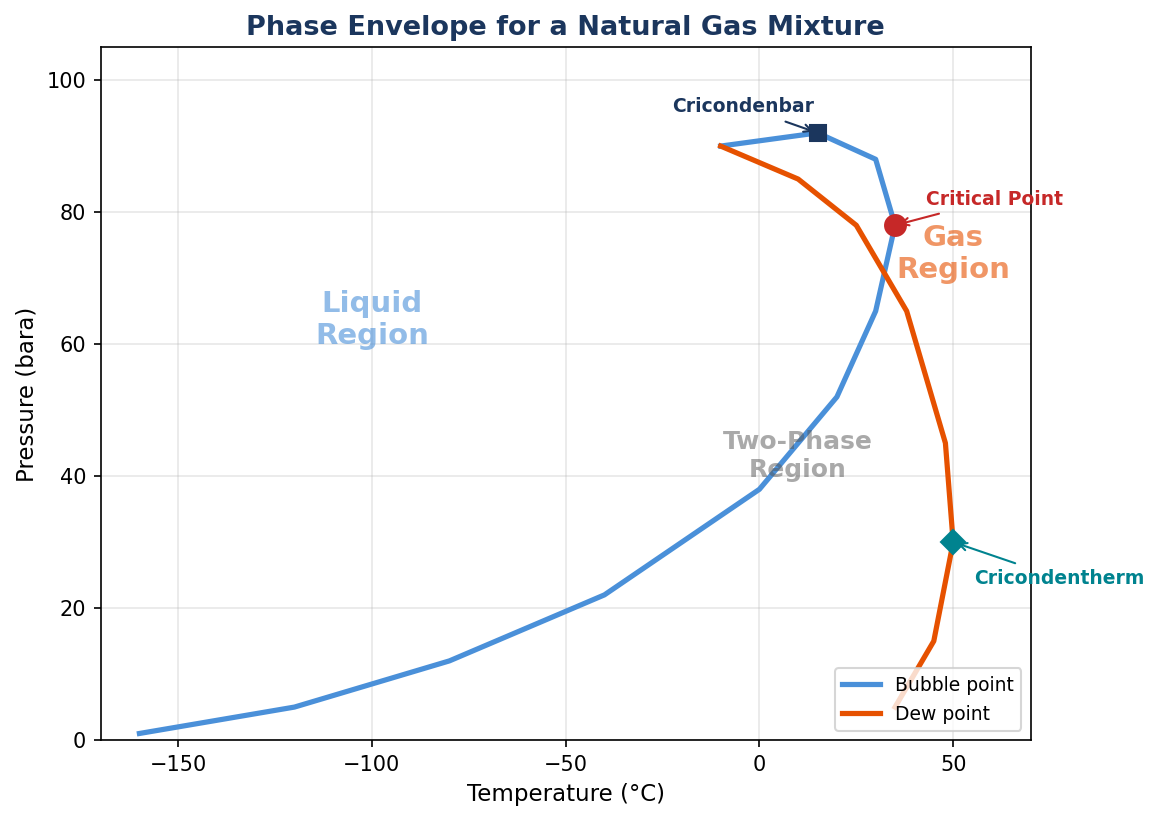

Create a Jupyter notebook that calculates the phase envelope for a natural gas

with 85% methane, 10% ethane, and 5% propane, using the SRK equation of state.

Plot the result with matplotlib.

The agent will:

- Load the

neqsim-api-patternsskill (Layer 2) for the correct code pattern - Create a notebook and write Python code calling the NeqSim engine (Layer 3)

- Run the flash calculations with the gas composition you provided (Layer 4)

- Generate a matplotlib plot of the phase envelope

- Save the result — all in about 30–60 seconds

This is agentic engineering: you describe what you want in plain English, and all four layers work together to deliver validated results.

1.5 The Task-Solving Workflow

For more substantial engineering problems, NeqSim provides a structured task-solving workflow. Instead of ad-hoc chat interactions, you can invoke a dedicated task-solving agent:

@solve.task hydrate formation temperature for wet gas at 100 bara

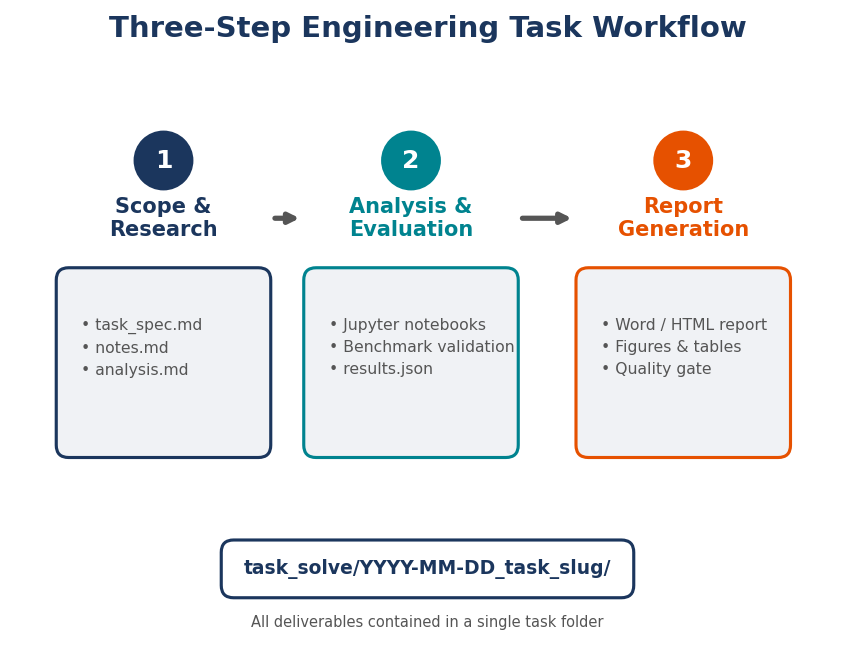

This triggers a three-step workflow:

- Scope and Research — The agent creates a task folder, identifies applicable standards, and writes a task specification

- Analysis and Evaluation — The agent creates Jupyter notebooks with NeqSim simulations, validates results against benchmarks, and runs uncertainty analysis

- Report — The agent generates a professional Word and HTML report with figures, tables, and conclusions

All outputs are organized in a structured task folder under task_solve/, making results reproducible and auditable. This workflow is covered in detail in Chapter 7.

1.6 What's Next

With your environment working, here are the key topics covered in later chapters:

The multi-agent system (Chapter 5) — NeqSim includes 25+ specialist agents for different engineering domains. A router agent analyzes your request and delegates to the right specialist, or composes multi-agent pipelines for cross-discipline tasks.

The skills library (Chapter 6) — Skills are the knowledge layer that makes agents reliable. Contributing a skill — a markdown file with tested code patterns and engineering rules — is the easiest way to improve the system. No Java required. Run neqsim new-skill "my-topic" to get started.

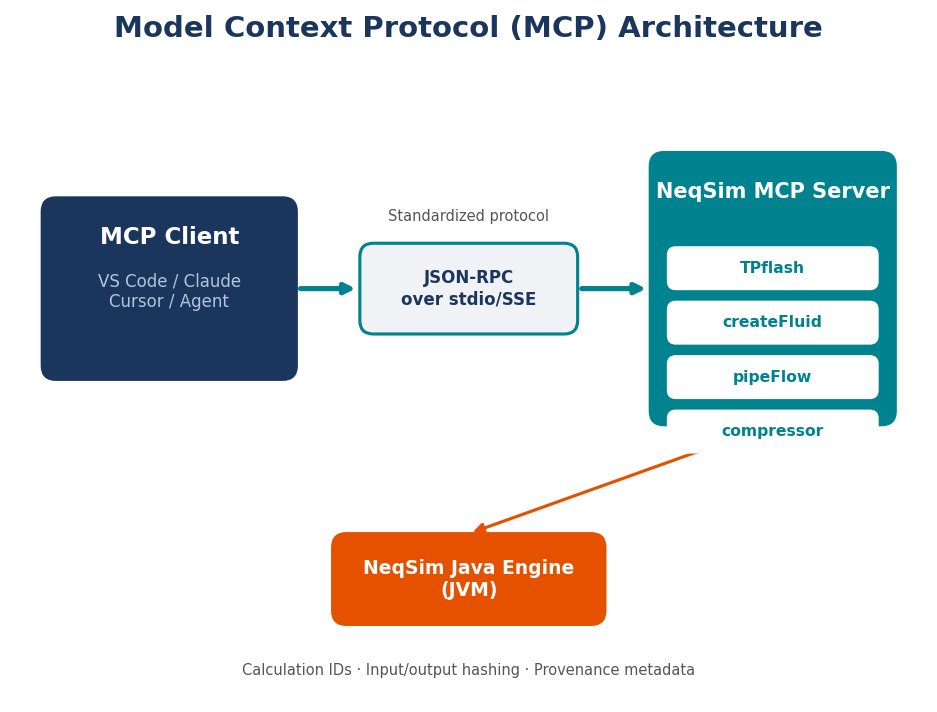

The MCP server (Chapter 8) — For quick calculations, application integration, or governed corporate deployment, NeqSim ships an MCP server exposing 48 engineering tools as a structured JSON API. Any MCP-compatible client (VS Code, Claude Desktop, Cursor, ChatGPT) can connect.

PaperLab (Appendix) — NeqSim includes a publication framework that treats scientific writing and code development as a single activity. This book was written using PaperLab.

Contributing — NeqSim welcomes contributions of all kinds:

| Contribution | Difficulty | What to do |

|---|---|---|

| Write a skill | Easy | neqsim new-skill "name" — domain knowledge, no coding |

| Add a benchmark | Easy | Compare NeqSim results to NIST data in docs/benchmarks/ |

| Create a notebook | Medium | Add a worked example to examples/notebooks/ |

| Add a unit test | Medium | Add tests under src/test/java/neqsim/ |

Resources:

| Resource | Location |

|---|---|

| NeqSim homepage | https://equinor.github.io/neqsimhome |

| Reference manual | REFERENCE_MANUAL_INDEX.md (350+ pages) |

| JavaDoc API | https://equinor.github.io/neqsimhome/javadoc/ |

| Notebook examples | examples/notebooks/ (30+ worked examples) |

| Discussion forum | https://github.com/equinor/neqsim/discussions |

1.7 Summary

- The four-layer stack — Agents (reasoning), Skills (knowledge), Physics Engine (computation), and Data (inputs and outputs) — is the architecture that makes agentic engineering work. No single layer is sufficient alone; together they form an integrated system for engineering task solving.

- Two setup paths are available: GitHub Codespaces for zero-install cloud access, or a local installation for full performance.

- Three commands get you from zero to a working environment:

git clone https://github.com/equinor/neqsim.git && cd neqsim

pip install -e devtools/

neqsim onboard

- The AI agent does the heavy lifting — you describe problems in natural language, and the agent loads the right skills, writes code against the physics engine, and generates validated results.

- The task-solving workflow provides structure for substantial engineering problems — from scoping through analysis to professional reports.

With your environment set up, you are ready to dive into the foundations. Chapter 2 explains why agentic engineering works — the fundamental insight that AI reasoning and physics engines have complementary strengths. Chapter 3 introduces the NeqSim physics engine itself. And Chapter 4 details the AI agent architecture that ties everything together.

Let us begin.

2 Introduction — Why Agentic Engineering?

Learning Objectives

After reading this chapter, the reader will be able to:

- Articulate why traditional engineering workflows are ripe for transformation by AI agents

- Explain the fundamental limitation of large language models for physics-based calculations

- Define "agentic engineering" and distinguish it from conventional automation, GUI-based simulation, and generic AI assistance

- Describe the key architectural principle of coupling AI reasoning with physics engines

2.1 The Engineering Challenge

A process engineer at an operating company on the Norwegian Continental Shelf begins their Monday morning with a familiar task. A wellstream composition has changed — the gas-oil ratio has shifted, the water cut has increased, and operations wants to know whether the existing separator train can handle the new conditions. The engineer opens a commercial process simulator, spends twenty minutes rebuilding the feed composition from a lab report, runs the simulation, exports the results to a spreadsheet, formats a summary table, writes two paragraphs of interpretation, and emails the result to the operations team. The entire exercise takes three hours. The physics involved — a pressure-temperature flash calculation on a hydrocarbon mixture — takes the computer less than one second.

This asymmetry between computation time and human workflow time is not an anomaly. It is the defining characteristic of modern engineering practice. The calculations themselves have been well understood for decades. The Soave-Redlich-Kwong equation of state was published in 1972 [1]. The Peng-Robinson equation followed in 1976 [2]. Michelsen's stability analysis and successive substitution algorithms for flash calculations were established in the early 1980s [3, 4]. The mathematical foundations of process simulation — mass and energy balances, phase equilibrium, transport phenomena — are mature fields. What has not matured is the workflow that surrounds these calculations.

Consider the typical activities that consume an engineer's week. Fluid property lookups: "What is the viscosity of this gas at 85 bar and 40°C?" Process simulation setup: configuring equipment, connecting streams, setting specifications, interpreting convergence warnings. Pipe sizing: looking up standards, calculating pressure drops, checking velocity limits. Report writing: transferring numbers from simulator to Word, making figures, writing boilerplate interpretation. Standards compliance: checking API, ISO, and NORSOK requirements against design parameters.

Each of these tasks follows a well-defined procedure. An experienced engineer could write down the steps on a whiteboard. The challenge is not intellectual novelty — it is volume, repetition, and the friction of translating between tools. The engineer's value lies in judgment, interpretation, and decision-making. Yet a significant fraction of their working hours is consumed by mechanical steps that could, in principle, be delegated.

The engineering software industry has attempted to address this problem for decades. Commercial simulators provide graphical user interfaces for process simulation. These tools are powerful, well-validated, and widely used. However, they share several limitations. They are expensive — annual license costs for a single seat can exceed $50,000. They are closed-source, meaning their internal calculations cannot be inspected or modified. They are GUI-driven, which means automation requires scripting in proprietary languages (OLE automation, macros, internal scripting environments). And they are isolated — getting results out of the simulator and into a report, a spreadsheet, or a decision-support system requires manual data transfer.

The result is an industry where engineers with doctoral-level expertise spend substantial portions of their time on tasks that a well-instructed undergraduate could perform, if only the undergraduate could be given precise, unambiguous instructions and access to the right tools.

This is precisely what AI agents can provide.

2.2 The AI Revolution and Its Limits

The emergence of large language models (LLMs) has transformed the landscape of what artificial intelligence can accomplish. The transformer architecture, introduced by Vaswani et al. [5], enabled a new class of models that learn rich representations of language from vast corpora of text. The scaling of these models — from hundreds of millions to hundreds of billions of parameters — produced capabilities that few researchers anticipated. GPT-3 demonstrated that a single model could perform translation, summarization, question answering, and even basic arithmetic without task-specific training [6]. GPT-4 and Claude showed emergent reasoning abilities — the capacity to decompose problems, follow multi-step instructions, and generate coherent long-form text [7].

These models are remarkably capable at understanding and generating natural language. They can explain thermodynamic concepts, describe the difference between SRK and Peng-Robinson equations of state, outline the steps of a Joule-Thomson expansion process, and discuss the physical meaning of fugacity. In many ways, they function as encyclopedic conversational partners with broad technical knowledge.

However, LLMs have a fundamental limitation that is critical for engineering applications: they cannot perform physics calculations. They generate plausible-sounding text, but they do not solve equations. The distinction is subtle but profound.

Consider a concrete example. Ask an LLM: "What is the density of methane at 100 bar and 25°C?" A well-trained model might respond with a number — perhaps 68 kg/m³ — and it might even be approximately correct, having memorized values from training data. But the model did not solve the Peng-Robinson equation of state to obtain that number. It performed pattern matching on its training corpus. If you change the question slightly — "What is the density of a mixture of 85 mol% methane and 15 mol% ethane at 100 bar and 25°C?" — the model has no reliable mechanism to compute the answer. It will still produce a number. That number may be plausible. It may even be close. But it was not calculated; it was confabulated.

This phenomenon — generating confident but incorrect answers — is known as hallucination, and it is particularly dangerous in engineering contexts. In creative writing, a plausible but invented fact is merely imprecise. In engineering, a plausible but wrong density can propagate through a pipe sizing calculation and result in an undersized relief valve.

The examples of hallucinated physics are numerous and instructive. LLMs asked to calculate compressibility factors will sometimes produce values greater than one for gases at low pressure (physically unreasonable). Asked to determine the dew point of a natural gas mixture, they may report a temperature that violates the Gibbs phase rule. Asked to size a separator, they may apply a Souders-Brown correlation with the wrong exponent. In each case, the output reads like engineering — it uses the right terminology, cites the right equations, follows the right structure — but the numerical content is unreliable.

The root cause is architectural. LLMs are next-token predictors trained on text. They do not have an internal cubic equation solver. They cannot iterate the Rachford-Rice equation [8]. They do not evaluate fugacity coefficients from mixing rules. These operations require numerical algorithms operating on thermodynamic models — precisely the machinery that a physics engine provides.

2.3 The Agentic Solution

The insight that motivates this book is simple: AI agents and physics engines have complementary strengths. The AI agent excels at understanding natural language intent, selecting appropriate methods, generating executable code, and interpreting results in context. The physics engine excels at solving the actual equations — cubic roots, phase stability, energy balances, transport properties — with numerical precision and thermodynamic consistency.

The combination is more capable than either component alone. An engineer can describe a problem in natural language: "Calculate the hydrate formation temperature for this gas composition at wellhead conditions." The AI agent parses the intent, identifies the required calculation (hydrate equilibrium), selects the appropriate thermodynamic model (CPA equation of state with hydrate model), writes the code to set up and execute the calculation in NeqSim, runs the code, and presents the result with physical interpretation. The physics engine ensures the answer is correct. The AI agent ensures the workflow is efficient.

We define agentic engineering as the practice of delegating engineering calculations and workflows to AI agents that autonomously plan and execute multi-step tasks using physics engines as their computational backend. This is distinct from several related concepts:

- AI-assisted search: Looking up information ("What EOS should I use for CO₂?"). The agent provides advice but does not compute.

- Code generation: Asking an AI to write a script ("Write a Python script to calculate density"). The human must review, run, and debug the script.

- Agentic engineering: The agent autonomously writes code, executes it, validates the results, handles errors, generates figures, and produces a report — with the human providing only the initial specification.

The key enabling mechanism is tool use [9]. Modern AI agents are not confined to generating text. They can invoke tools: read files, write files, execute terminal commands, run notebook cells, search codebases, and call APIs. Each tool gives the agent a new capability. A file-reading tool lets the agent inspect source code. A terminal tool lets the agent compile and run programs. A notebook tool lets the agent execute Python cells and observe outputs. Together, these tools transform the agent from a conversational assistant into an autonomous engineer.

The architecture can be represented as a feedback loop:

Engineer (natural language) → AI Agent → Code Generation → Physics Engine (NeqSim)

↑ ↓

←──── Result Interpretation ←────────

The AI agent operates in a cycle: reason about the task, select an action (write code, read a file, run a command), observe the result, reason again, and repeat until the task is complete. This is the ReAct pattern described by Yao et al. [10] — interleaving reasoning traces with actions. The agent does not simply generate a single response; it works iteratively, adapting its approach based on intermediate results.

2.4 Agents vs. Scripts vs. GUIs

To appreciate what agentic engineering offers, it is instructive to compare it with the alternatives that engineers currently use.

Commercial GUI simulators are the industry standard. They provide graphical flowsheet construction, extensive component databases, and validated thermodynamic models. Their strengths are well-established: validated results accepted by regulatory authorities, extensive training materials, and large user communities. Their weaknesses are equally well-known: high license costs ($30,000–$100,000+ per seat annually), closed-source calculations that cannot be inspected or modified, limited automation capabilities, and vendor lock-in that makes it difficult to switch platforms or integrate with other tools.

Custom scripts (Python, MATLAB, Excel/VBA) offer flexibility and low cost. Engineers write their own calculation routines, tailored to specific problems. The scripts are transparent, modifiable, and free to distribute. However, they require significant programming expertise to write and maintain. They are brittle — a small change in requirements can require substantial code restructuring. They rarely include comprehensive thermodynamic models; most engineers implement simplified correlations rather than rigorous equations of state. And they do not scale: a script written for one pipe sizing problem must be rewritten for a different scenario.

Agentic systems represent a third approach. The agent uses a rigorous physics engine (in our case, NeqSim) as its computational backend, gaining access to validated thermodynamic models, process equipment simulations, and standards calculations. But instead of requiring the user to interact through a GUI or write code manually, the agent accepts natural language instructions and generates the code itself.

| Feature | Commercial GUI | Custom Scripts | Agentic System |

|---|---|---|---|

| User interface | Graphical flowsheet | Code editor | Natural language |

| Setup time | Minutes to hours | Hours to days | Seconds to minutes |

| Thermodynamic rigor | High | Low to medium | High (physics engine) |

| Adaptability | Limited | High (manual) | High (automatic) |

| Cost per seat | $30K–$100K/year | Free | Free (open-source engine) |

| Automation | Difficult | Native | Native |

| Report generation | Manual export | Manual coding | Automatic |

| Error handling | User-driven | Try-except blocks | Self-healing |

| Knowledge transfer | Training courses | Code documentation | Embedded in instructions |

The adaptability row deserves emphasis. When a commercial simulator encounters a non-standard problem — an unusual fluid, a novel equipment configuration, a specific regulatory requirement — the user must work within the constraints of the GUI or resort to custom unit operations (if available). A custom script can handle anything the programmer can code, but at the cost of development time. An agentic system can adapt on the fly: if the standard approach fails, the agent can try alternative methods, consult different skills, or modify its strategy — all within a single task execution.

2.5 What Makes This Book Different

The literature on artificial intelligence in engineering is growing rapidly. Numerous papers describe the application of machine learning to fluid property prediction, surrogate modeling, process optimization, and fault detection. These are valuable contributions. However, they typically focus on one narrow application — training a neural network to predict viscosity, for example — and they treat the AI model as a black-box function approximator.

This book takes a fundamentally different approach. We are not training AI models to replace physics. We are coupling AI reasoning with physics engines to automate engineering workflows. The AI agent does not predict density — it writes code that calls a rigorous equation of state to calculate density. The AI agent does not approximate flash calculations — it invokes Michelsen's algorithm through a validated thermodynamic library.

Moreover, this is not a theoretical treatise. The system described in this book is operational. It has been used to solve over forty real engineering tasks for assets on the Norwegian Continental Shelf. These tasks range from simple property lookups (completed in seconds) to comprehensive field development studies with uncertainty analysis, risk evaluation, and professional engineering reports (completed in hours rather than weeks). Every example in this book comes from actual task execution. Every code sample has been run. Every result has been validated.

The system is built on NeqSim [11], an open-source thermodynamics and process simulation library developed at the Norwegian University of Science and Technology (NTNU) in collaboration with Equinor and other industry partners. NeqSim provides the physics. AI agents — specifically, large language models configured with specialized instructions and tools — provide the reasoning and automation. The combination, which we call "agentic engineering," is the subject of this book.

2.6 A Preview of What Is Possible

To make the concept concrete, consider three examples at increasing levels of complexity.

Example 1: Property lookup (seconds)

An engineer asks: "What is the Joule-Thomson coefficient of natural gas at 150 bar and 35°C? The gas is 85% methane, 8% ethane, 4% propane, 2% CO₂, 1% nitrogen."

The agent writes five lines of Python code: create a fluid with the given composition using the SRK equation of state, run a TP flash at the specified conditions, call initProperties(), and read the Joule-Thomson coefficient. Total execution time: under two seconds. The result includes the numerical value, the units, and a brief physical interpretation ("The positive JT coefficient of 0.42 K/bar indicates this gas will cool upon throttling, which is relevant for Joule-Thomson valve design and hydrate risk assessment").

In the traditional workflow, the engineer would open a simulator, configure the fluid, run the flash, and read the value from a results table. Time: 5–15 minutes. In the agentic workflow: 10 seconds including the time to type the question.

Example 2: Process simulation notebook (minutes)

An engineer provides a design basis: "Model a two-stage compression system for export gas. Inlet conditions: 30 bar, 25°C, 500,000 Sm³/day of dry gas. Target: 200 bar export pressure. Include intercooling to 40°C and calculate power consumption."

The agent creates a Jupyter notebook with the following steps: (1) define the fluid and set the feed conditions, (2) build a ProcessSystem with a first-stage compressor, aftercooler, second-stage compressor, and final cooler, (3) run the simulation, (4) extract power consumption, discharge temperatures, and polytropic efficiencies, (5) generate pressure-temperature and power plots, and (6) write a summary with key results. The notebook is executed cell by cell, with the agent verifying each cell's output before proceeding. Total time: 3–5 minutes.

Example 3: Full field development study (hours)

A team requests: "Evaluate the economic viability of a subsea tieback for a gas condensate discovery. Reservoir conditions: 350 bar, 95°C. Distance to host platform: 25 km. Water depth: 350 m. Estimated GIP: 8 GSm³. Provide NPV analysis with uncertainty."

The agent executes a complete task-solving workflow: creates a task folder, writes a technical specification, builds a fluid model from the given composition, runs production profile simulations using decline curve analysis, models the pipeline and subsea system, estimates CAPEX and OPEX using parametric cost models, calculates NPV under the Norwegian petroleum tax regime, runs Monte Carlo simulations for uncertainty analysis (varying GIP, gas price, CAPEX multiplier), produces a tornado diagram for sensitivity ranking, creates a risk register with ISO 31000 risk matrix, and generates a professional engineering report in both Word and HTML formats with numbered figures, tables, and references. Total time: 1–3 hours, depending on the number of Monte Carlo iterations. The equivalent manual effort: 2–4 weeks for a team of engineers.

2.7 Book Structure Overview

This book is organized in four parts.

Part I: Foundations (Chapters 1–4) establishes the conceptual and technical groundwork. Chapter 1 provides a hands-on getting started guide — the four-layer architecture, installation, a first agentic session, and the task-solving workflow. Chapter 2 (this chapter) introduces the motivation and concept of agentic engineering. Chapter 3 describes the NeqSim physics engine — its thermodynamic models, flash algorithms, process equipment, and Python interface. Chapter 4 explains the AI agent architecture: tools, skills, instructions, and the four-layer design (agents, skills, physics engine, and data).

Part II: The Agentic Platform (Chapters 5–8) presents the infrastructure that makes agentic engineering practical. Chapter 5 describes the multi-agent system — over twenty specialist agents and the router that composes them into workflows. Chapter 6 covers the skills library: curated knowledge packages that encode domain expertise, code patterns, and error recovery strategies. Chapter 7 presents the task-solving workflow that structures how agents approach engineering problems, from scope and research through analysis to professional reports. Chapter 8 introduces the MCP server — a governed interface that wraps NeqSim calculations in standardised, validated, and auditable tool definitions.

Part III: Worked Examples (Chapters 9–11) demonstrates agentic engineering solving progressively complex problems. Chapter 9 covers thermodynamic property calculations and PVT analysis — from simple lookups to multi-component phase envelopes and gas quality compliance. Chapter 10 addresses process simulation and equipment design — compressors, heat exchangers, separators, and complete process trains. Chapter 11 treats flow assurance and pipeline design: hydrate prediction, wax management, corrosion assessment, and pipeline hydraulics.

Part IV: Industrial Applications and Future (Chapters 12–13) connects the technical framework to real-world practice. Chapter 12 presents case studies from the Norwegian Continental Shelf, documenting how the agentic system has been applied to actual engineering problems across thermodynamics, process simulation, flow assurance, and field development. Chapter 13 looks forward — examining multi-model orchestration, web-based interfaces, organizational adoption, and the broader implications for engineering education and practice.

Each chapter includes worked examples with complete code, exercises for the reader, and references to the NeqSim source code and documentation. The accompanying repository contains all notebooks, data files, and agent configurations used throughout the book.

The goal is not to replace engineering judgment. It is to amplify it — to free engineers from mechanical calculation workflows so they can focus on what humans do best: understanding context, making decisions under uncertainty, and exercising professional judgment. The physics engine ensures the answers are right. The AI agent ensures the work gets done. Together, they represent a new paradigm for engineering practice.

Summary

Key points from this chapter:

- Engineers spend a disproportionate amount of time on mechanical workflow steps (data entry, tool manipulation, report formatting) rather than on engineering judgment

- Large language models are powerful at understanding intent and generating code, but they cannot reliably perform physics calculations — they hallucinate numerical results

- Agentic engineering couples AI reasoning with physics engines: the agent writes code, the engine computes results, and the agent interprets and presents them

- This approach is fundamentally different from both commercial GUI simulators and custom scripts, offering the rigor of validated thermodynamic models with the adaptability of natural language interaction

- The system described in this book is operational and has been used for over forty real engineering tasks on the Norwegian Continental Shelf

Exercises

- Exercise 2.1: Identify three engineering tasks from your own practice that follow a well-defined procedure and could potentially be delegated to an AI agent. For each, describe the inputs, the calculation steps, and the expected outputs.

- Exercise 2.2: Ask a large language model (ChatGPT, Claude, or similar) to calculate the density of a methane-ethane mixture (70/30 mol%) at 80 bar and 15°C. Compare the answer with a value from NIST WebBook or a commercial simulator. Discuss the reliability of the LLM's answer.

- Exercise 2.3: Using the comparison table in Section 2.4, evaluate which approach (commercial GUI, custom script, or agentic system) would be most appropriate for (a) a one-time relief valve sizing calculation, (b) a recurring weekly production optimization, and (c) a full FEED study for a new platform.

3 The NeqSim Physics Engine

Learning Objectives

After reading this chapter, the reader will be able to:

- Describe the architecture and capabilities of NeqSim as an open-source thermodynamic and process simulation library

- Select the appropriate equation of state for a given application (natural gas, associating fluids, custody transfer)

- Perform flash calculations and correctly initialize properties for downstream use

- Build process flowsheets using NeqSim's equipment models and ProcessSystem framework

- Access NeqSim's Java classes from Python using the jneqsim gateway

3.1 Overview of NeqSim

NeqSim — the Non-Equilibrium Simulator — is an open-source Java library for thermodynamic calculations and process simulation [11]. Originally developed at the Norwegian University of Science and Technology (NTNU) by Even Solbraa and colleagues [12], it has been continuously refined over two decades in collaboration with Equinor and other partners in the Norwegian petroleum industry. The name reflects its origins in non-equilibrium thermodynamics, though the library has grown far beyond that original scope to encompass a comprehensive suite of equilibrium thermodynamics, transport properties, process equipment models, and engineering standards calculations.

At its core, NeqSim provides three fundamental capabilities. First, it implements multiple equations of state for calculating thermodynamic properties of fluid mixtures — density, enthalpy, entropy, fugacity, heat capacity, speed of sound, and Joule-Thomson coefficients. Second, it provides flash algorithms that determine phase equilibrium at specified conditions — how a mixture splits into vapor, liquid, and aqueous phases. Third, it offers process equipment models — separators, compressors, heat exchangers, valves, pipes, distillation columns, and more — that can be assembled into process flowsheets.

The library contains thermodynamic data for over 100 chemical components, ranging from light gases (hydrogen, nitrogen, CO₂, H₂S) through hydrocarbons (methane to C20+ pseudo-components) to polar molecules (water, methanol, MEG) and ions for electrolyte systems. It supports petroleum fluid characterization, including plus-fraction splitting and grouping using standard methods.

NeqSim is written in Java, which provides several advantages for an engineering library: strong typing catches many errors at compile time, the JVM ensures consistent numerical behavior across platforms, and the extensive Java ecosystem provides robust mathematics libraries (EJML, Apache Commons Math, JAMA). The library is distributed as a single JAR file that can be embedded in any Java application, called from Python through jpype, or accessed through a Model Context Protocol (MCP) server for integration with AI agents.

Why NeqSim for Agentic Engineering

Several characteristics make NeqSim particularly well-suited as the physics backend for AI agents, beyond its technical capabilities:

Open source. The entire source code is available on GitHub. An AI agent can read the source code to understand method signatures, parameter types, and return values. It can search for examples in the test suite. It can examine how a particular equation is implemented. This transparency is impossible with closed-source commercial simulators.

Programmatic API. NeqSim is a library, not a GUI application. Every operation — creating a fluid, running a flash, building a process — is accomplished through method calls. This is natural for an AI agent that generates code. There is no need to script GUI interactions or navigate dialog boxes.

Comprehensive test suite. The repository contains thousands of JUnit tests that serve as executable examples. When an agent needs to understand how to use a particular class, it can read the corresponding test for correct usage patterns.

Self-documenting. JavaDoc comments, skill files, and extensive markdown documentation provide the agent with structured knowledge about correct API usage, physical constraints, and common pitfalls.

3.2 Equations of State

The equation of state (EOS) is the mathematical foundation of all thermodynamic calculations. It relates pressure, volume (or density), and temperature for a fluid mixture, and from it, all other thermodynamic properties can be derived through fundamental thermodynamic relations. NeqSim implements several equations of state, each suited to different applications.

3.2.1 The Soave-Redlich-Kwong (SRK) Equation

The SRK equation of state [1] is one of the most widely used cubic equations in the petroleum industry. It takes the form:

$$P = \frac{RT}{v - b} - \frac{a(T)}{v(v + b)}$$

where $P$ is pressure, $T$ is temperature, $v$ is molar volume, $R$ is the gas constant, and $a(T)$ and $b$ are mixture parameters calculated from pure-component critical properties and binary interaction parameters. The temperature dependence of the attractive parameter $a(T)$ is given by the Soave alpha function, which uses the acentric factor $\omega$ to correlate the vapor pressure curve.

In NeqSim, an SRK system is created as:

from neqsim import jneqsim

fluid = jneqsim.thermo.system.SystemSrkEos(273.15 + 25.0, 60.0)

fluid.addComponent("methane", 0.85)

fluid.addComponent("ethane", 0.10)

fluid.addComponent("propane", 0.05)

fluid.setMixingRule("classic")

The SRK equation is a reliable general-purpose choice for hydrocarbon systems. It performs well for vapor-liquid equilibrium of non-polar mixtures, gas phase properties, and conditions away from the critical point. Its primary limitation is the prediction of liquid densities, which can deviate by 5–15% from experimental values. Volume translation methods (Peneloux correction) can improve liquid density prediction, and NeqSim supports these corrections.

3.2.2 The Peng-Robinson (PR) Equation

The Peng-Robinson equation [2] was developed specifically to improve liquid density predictions:

$$P = \frac{RT}{v - b} - \frac{a(T)}{v(v + b) + b(v - b)}$$

The different denominator in the attractive term gives the PR equation a slightly different molar volume dependence that generally yields better liquid density predictions than SRK, particularly for heavier hydrocarbons. Both equations predict vapor-liquid equilibrium with comparable accuracy.

In NeqSim: jneqsim.thermo.system.SystemPrEos(T, P).

3.2.3 The CPA Equation (Cubic-Plus-Association)

The CPA equation of state [13] extends the SRK equation with an association term that accounts for hydrogen bonding between polar molecules. This is essential for systems containing water, methanol, monoethylene glycol (MEG), or other associating species. The standard cubic equations of state perform poorly for these systems because hydrogen bonding fundamentally alters the thermodynamic behavior — for example, the anomalous behavior of water (density maximum at 4°C, high heat capacity, strong deviation from ideal mixing with hydrocarbons) cannot be captured by a simple cubic equation.

In NeqSim, the CPA implementation uses the variant developed at Statoil (now Equinor): jneqsim.thermo.system.SystemSrkCPAstatoil(T, P). This is the recommended equation of state for any system containing water and hydrocarbons — which encompasses most production systems in the oil and gas industry.

CPA is essential for:

- Hydrate equilibrium calculations (requires accurate water fugacity)

- MEG/methanol injection calculations (inhibitor dosing)

- Water content of gas (water dew point)

- Three-phase (gas-oil-water) separation

- CO₂ systems with water (CCS applications)

3.2.4 The GERG-2008 Equation

The GERG-2008 equation [14] is a multi-parameter equation of state developed specifically for natural gas applications. Unlike cubic equations, which are based on a simple two-parameter model, GERG-2008 uses an empirical Helmholtz free energy formulation with hundreds of parameters fitted to experimental data for 21 natural gas components and their binary mixtures. It represents the state of the art for custody transfer calculations and is the basis for ISO 20765.

In NeqSim: jneqsim.thermo.system.SystemGERG2008(T, P).

GERG-2008 provides the highest accuracy for natural gas properties — density uncertainties of 0.1% or better in the gas phase, compared to 1–3% for cubic equations. However, it is limited to the 21 components in the GERG model and does not support heavy hydrocarbons, polar molecules (except water at limited conditions), or association. It is the correct choice when accuracy is paramount and the fluid falls within its composition range.

3.2.5 Selecting an Equation of State

The choice of equation of state is one of the most consequential decisions in a thermodynamic calculation. An incorrect choice can introduce systematic errors that propagate through all downstream results. The following table provides guidance:

| Application | Recommended EOS | Rationale |

|---|---|---|

| Dry natural gas (processing, transport) | SRK or PR | Well-characterized, fast, adequate accuracy |

| Gas with water (hydrates, dew point) | CPA | Association term required for water |

| MEG/methanol injection | CPA | Polar + associating system |

| Custody transfer metering | GERG-2008 | Highest accuracy for gas density |

| CO₂ capture and transport | CPA | CO₂-water-amine interactions |

| Black oil / condensate | SRK or PR with characterization | Plus-fraction handling needed |

| Hydrogen systems | SRK or PR | Limited experimental data; CPA for H₂-water |

| Electrolyte / brine | Electrolyte-CPA | Ion interactions needed |

An important principle: the equation of state must be selected before any calculations are performed, and the choice should be justified based on the fluid system and the required accuracy. AI agents are instructed to consider this selection as a mandatory first step.

3.3 Flash Calculations

Flash calculations are the computational heart of process simulation. A flash calculation determines how a mixture distributes itself among phases (vapor, liquid, second liquid, aqueous) at specified thermodynamic conditions. The result includes the amounts and compositions of each phase, along with all associated thermodynamic properties.

3.3.1 Types of Flash Calculations

NeqSim supports multiple flash specifications, corresponding to different pairs of fixed thermodynamic variables:

- TP flash (temperature-pressure): The most common specification. Given T and P, determine the phase fractions and compositions. Used whenever both T and P are known — inlet conditions, equipment at specified conditions.

- PH flash (pressure-enthalpy): Given P and total enthalpy, determine T and phase state. Used for adiabatic processes — valves, compressors, flash drums where heat exchange is known.

- PS flash (pressure-entropy): Given P and total entropy, determine T and phase state. Used for isentropic processes — ideal compressor work, turbine calculations.

- Dew point calculations: Find the temperature (at given P) or pressure (at given T) where the first liquid drop forms.

- Bubble point calculations: Find the temperature (at given P) or pressure (at given T) where the first vapor bubble forms.

3.3.2 The Algorithm

NeqSim implements the Michelsen stability analysis and successive substitution algorithm [3, 4], which is the industry standard for flash calculations. The algorithm proceeds in two stages:

Stage 1 — Stability analysis. Given a single-phase feed at the specified conditions, determine whether the phase is thermodynamically stable. This is done by searching for a trial phase composition that would lower the total Gibbs energy of the system. If such a composition is found, the single phase is unstable and will split into two (or more) phases.

Stage 2 — Phase split calculation. If the system is unstable, solve the Rachford-Rice equation [8] to determine the phase fractions and compositions at equilibrium. This involves iterating the equilibrium ratios (K-values) until the fugacity of each component is equal in all phases:

$$f_i^V = f_i^L \quad \text{for all components } i$$

where $f_i^V$ and $f_i^L$ are the fugacities of component $i$ in the vapor and liquid phases, respectively. The fugacities are calculated from the equation of state.

3.3.3 The Critical Importance of initProperties()

A critical aspect of using NeqSim — and one that has been the source of countless errors in both manual and agent-generated code — is the initialization of physical properties after a flash calculation. The flash algorithm determines phase equilibrium (compositions, phase fractions, fugacities) but does not automatically compute transport properties (viscosity, thermal conductivity) or certain derived thermodynamic properties (density in convenient units, heat capacities at the phase level).

After any flash calculation, the following call is mandatory:

ops = jneqsim.thermodynamicoperations.ThermodynamicOperations(fluid)

ops.TPflash()

fluid.initProperties() # MANDATORY — initializes both thermo AND transport properties

Without initProperties(), calls to getViscosity(), getThermalConductivity(), and getDensity() may return zero. This is not an error in NeqSim — it is a deliberate design choice that separates the fast flash calculation (needed millions of times in process simulation inner loops) from the more expensive property initialization (needed only when properties are to be reported). But for the end user, including AI agents, forgetting this call is the single most common source of incorrect results.

The NeqSim agent instructions and skills contain explicit reminders about this requirement. The neqsim-api-patterns skill includes initProperties() in every flash example. The neqsim-troubleshooting skill lists "zero viscosity" as the first diagnostic to check. This is a concrete example of how domain-specific knowledge encoded in agent instructions prevents systematic errors.

3.3.4 Reading Results After Flash

Once initProperties() has been called, the full suite of fluid properties is available:

# Phase properties

density_gas = fluid.getPhase("gas").getDensity("kg/m3")

density_oil = fluid.getPhase("oil").getDensity("kg/m3")

viscosity = fluid.getPhase("gas").getViscosity("kg/msec")

thermal_cond = fluid.getPhase("gas").getThermalConductivity("W/mK")

cp = fluid.getPhase("gas").getCp("J/molK")

# System-level properties

z_factor = fluid.getPhase("gas").getZ()

molecular_weight = fluid.getMolarMass("kg/mol")

enthalpy = fluid.getEnthalpy("J")

# Component properties within a phase

y_methane = fluid.getPhase("gas").getComponent("methane").getx() # mole fraction

NeqSim uses a consistent unit system throughout its API. Temperatures are in Kelvin internally (the user provides offsets like 273.15 + 25.0), pressures are in bara, and flows can be specified in various units using string parameters ("kg/hr", "m3/hr", "MSm3/day").

3.4 Process Equipment

NeqSim models over 40 types of process equipment, organized under the neqsim.process.equipment package. Each equipment type implements the ProcessEquipmentInterface, which provides a consistent API for connecting streams, running calculations, and reading results. Equipment is assembled into flowsheets using the ProcessSystem class.

3.4.1 Streams

The Stream class represents a material flow — a fluid at specified conditions and flow rate. Streams are the connections between equipment:

Stream = jneqsim.process.equipment.stream.Stream

feed = Stream("feed gas", fluid)

feed.setFlowRate(100000.0, "kg/hr")

feed.setTemperature(25.0, "C")

feed.setPressure(60.0, "bara")

3.4.2 Separators

Separators model the phase separation of multiphase mixtures. NeqSim supports two-phase (gas-liquid) and three-phase (gas-oil-water) separators:

Separator = jneqsim.process.equipment.separator.Separator

ThreePhaseSeparator = jneqsim.process.equipment.separator.ThreePhaseSeparator

hp_sep = Separator("HP separator", feed)

# After running, access outlet streams:

# hp_sep.getGasOutStream()

# hp_sep.getLiquidOutStream()

3.4.3 Compressors

Compressors model the compression of gas streams. NeqSim calculates polytrophic head, power consumption, discharge temperature, and efficiency:

Compressor = jneqsim.process.equipment.compressor.Compressor

comp = Compressor("first stage", hp_sep.getGasOutStream())

comp.setOutletPressure(120.0, "bara")

comp.setPolytropicEfficiency(0.78)

3.4.4 Heat Exchangers

Heat exchangers model heat transfer between two streams. NeqSim supports specification by outlet temperature, duty, or UA value:

Heater = jneqsim.process.equipment.heatexchanger.Heater

cooler = Heater("intercooler", comp.getOutletStream())

cooler.setOutTemperature(273.15 + 40.0)

3.4.5 Valves and Pipes

Valves model pressure reduction (Joule-Thomson expansion), while pipes model pressure drop due to friction:

ThrottlingValve = jneqsim.process.equipment.valve.ThrottlingValve

PipeBeggsAndBrills = jneqsim.process.equipment.pipeline.PipeBeggsAndBrills

valve = ThrottlingValve("JT valve", feed)

valve.setOutletPressure(30.0, "bara")

pipe = PipeBeggsAndBrills("export pipeline", feed)

pipe.setPipeWallRoughness(5e-5)

pipe.setLength(25000.0, "m")

pipe.setDiameter(0.3, "m")

3.4.6 Building a Process Flowsheet

The ProcessSystem class is the container that assembles individual equipment into a flowsheet. Equipment is added in the order of flow, and the system handles stream connections:

ProcessSystem = jneqsim.process.processmodel.ProcessSystem

process = ProcessSystem()

process.add(feed)

process.add(hp_sep)

process.add(comp)

process.add(cooler)

process.run()

# Read results

power = comp.getPower("kW")

discharge_temp = comp.getOutletStream().getTemperature("C")

The ProcessSystem enforces unique equipment names, handles recycle convergence through iterative calculation, and provides automation interfaces for variable access. For large plants with multiple process areas, multiple ProcessSystem objects can be assembled into a ProcessModel.

3.5 Standards and Gas Quality

One of NeqSim's distinguishing features is its built-in support for engineering standards. The library implements calculations defined by international standards organizations, enabling direct computation of standardized properties without external tools or manual lookup.

Supported standards include:

| Standard | Description | NeqSim Implementation |

|---|---|---|

| ISO 6976 [15] | Natural gas — Calorific value, density, and Wobbe index | Standard_ISO6976 |

| ISO 20765 | Natural gas — Thermodynamic properties (GERG-2008) | Via GERG-2008 EOS |

| AGA 8 | Compressibility factor for natural gas | Standard_AGA8 |

| GPA 2145 | Physical constants for hydrocarbons | Component database |

| EN ISO 13443 | Standard reference conditions | Built into unit conversion |

| ISO 18453 | Natural gas — Correlation for water content | Moisture calculation |

| ASTM D1945 | Standard practice for analysis of natural gas by GC | Composition handling |

These standards are not merely informational — they are executable. An agent can calculate an ISO 6976 superior calorific value with a single method call:

Standard_ISO6976 = jneqsim.standards.gasquality.Standard_ISO6976

iso = Standard_ISO6976(fluid)

iso.calculate()

gcv = iso.getValue("SuperiorCalorificValue", "MJ/Sm3")

wobbe = iso.getValue("SuperiorWobbeIndex", "MJ/Sm3")

This capability is particularly valuable for agentic workflows because gas quality calculations are frequently requested — they are a core part of custody transfer, tariff calculations, and pipeline gas specifications. Having them available as direct API calls means the agent can compute certified values in seconds.

3.6 The Python Gateway

While NeqSim is written in Java, the primary interface for agentic workflows is Python, accessed through the jneqsim module. This module uses jpype to bridge the Java Virtual Machine (JVM) from Python, providing direct access to all Java classes as if they were Python objects.

The standard import pattern is:

from neqsim import jneqsim

# All Java classes are accessible via their full package path

SystemSrkEos = jneqsim.thermo.system.SystemSrkEos

ThermodynamicOperations = jneqsim.thermodynamicoperations.ThermodynamicOperations

Stream = jneqsim.process.equipment.stream.Stream

ProcessSystem = jneqsim.process.processmodel.ProcessSystem

The jpype bridge handles type conversion between Python and Java automatically. Python floats become Java doubles, Python strings become Java Strings, and Java arrays can be accessed as Python sequences. Method overloading is resolved based on argument types.

This architecture means that Jupyter notebooks — the primary output format for agentic engineering tasks — can access the full power of NeqSim without any functionality restrictions. Every Java class, every method, every calculation is available. The notebooks serve as both computational scripts and documentation, combining code, results, figures, and interpretation in a single reproducible artifact.

3.7 Why Open Source Matters for Agentic Systems

The open-source nature of NeqSim is not merely a licensing convenience — it is an architectural necessity for effective agentic engineering. This claim requires justification, as commercial simulators are also powerful and well-validated.

The fundamental issue is observability. An AI agent learns to use a tool by observing its behavior — reading documentation, examining examples, inspecting source code, and running tests. With an open-source library, all of these learning modalities are available. The agent can:

- Read the source code to understand exactly what a method does, what parameters it expects, and what it returns.

- Search the test suite for working examples of how to use a class correctly. The tests serve as the ground truth for API usage.

- Examine the JavaDoc for documented behavior, parameter constraints, and return value descriptions.

- Read the skill files — curated knowledge packages that encode correct patterns, common pitfalls, and recovery strategies.

- Inspect the git history to understand how the API has evolved and what changes have been made recently.

With a closed-source simulator, the agent has access to documentation (if published) and possibly examples. But it cannot read the source code to disambiguate unclear documentation, it cannot search the test suite for verified usage patterns, and it cannot understand the internal implementation to diagnose unexpected behavior.

This observability advantage compounds over time. As the agent encounters new problems and develops new solutions, those solutions can be encoded in skill files, added to the test suite, and documented — all within the same repository. The knowledge base grows organically. The more the system is used, the more capable it becomes. This virtuous cycle is only possible when the physics engine is open and transparent.

The practical implication is significant. As documented throughout this book and in the NeqSim reference materials [16, 17], the combination of a rigorous open-source thermodynamic library with AI agents that can read, understand, and use that library creates a system that is both more capable and more trustworthy than either component alone.

Summary

Key points from this chapter:

- NeqSim is an open-source Java library providing rigorous thermodynamic calculations, flash algorithms, process equipment models, and engineering standards

- The choice of equation of state (SRK, PR, CPA, GERG-2008) must be matched to the fluid system — CPA for water-containing systems, GERG-2008 for custody transfer accuracy

- Flash calculations determine phase equilibrium;

initProperties()must always be called after a flash before reading transport properties - Process equipment is assembled into flowsheets using

ProcessSystem, which handles stream connections and recycle convergence - Built-in standards (ISO 6976, AGA 8, etc.) enable direct calculation of standardized gas quality parameters

- The Python gateway (

jneqsim) provides full access to all Java classes from Jupyter notebooks - Open-source transparency is an architectural necessity for agentic systems — agents learn by reading source code, tests, and documentation

Exercises

- Exercise 3.1: Create a NeqSim fluid representing a North Sea gas condensate (methane 0.72, ethane 0.08, propane 0.05, i-butane 0.02, n-butane 0.03, i-pentane 0.01, n-pentane 0.01, n-hexane 0.01, CO₂ 0.03, nitrogen 0.02, water 0.02). Perform a TP flash at 80 bar and 30°C. Report the gas and liquid phase fractions and the density of each phase.

- Exercise 3.2: For the fluid in Exercise 3.1, calculate the cricondenbar and cricondentherm by running dew point calculations over a range of pressures and temperatures. Plot the phase envelope.

- Exercise 3.3: Build a two-stage separation system (HP separator at 60 bar, LP separator at 5 bar) for the fluid in Exercise 3.1 at a flow rate of 50,000 kg/hr. Report the gas and oil production rates from each separator.

- Exercise 3.4: Compare the gas density predicted by SRK, PR, and GERG-2008 for pure methane at 100 bar and temperatures from -20°C to 100°C. Plot the results and discuss which equation gives the most accurate predictions and why.

4 AI Agents, Skills, and Tool-Use Architecture

Learning Objectives

After reading this chapter, the reader will be able to:

- Trace the evolution from simple chatbots to autonomous tool-using agents

- Explain the ReAct (Reasoning + Acting) paradigm and its application to engineering tasks

- Describe the four-layer architecture of agents, skills, physics engine, and data

- Understand how agent instructions and skill files encode domain-specific engineering knowledge

- Identify the guardrails and safety mechanisms that prevent common errors in agent-generated code

4.1 From Chatbots to Agents

The history of conversational AI can be understood as a progression through three distinct paradigms, each representing a qualitative leap in capability.

Paradigm 1: Pattern-matching chatbots. The earliest conversational systems, from ELIZA (1966) to the rule-based customer service bots of the 2010s, operated by matching user inputs against predefined patterns and returning scripted responses. They had no understanding of language, no memory of context beyond simple slot-filling, and no ability to perform any action beyond generating text from templates. Their utility was limited to narrow, well-defined domains where the space of possible user inputs could be enumerated in advance.

Paradigm 2: Neural language models. The transformer architecture [5] and the subsequent scaling of language models [6] produced systems with a qualitatively different relationship to language. Models like GPT-3, GPT-4, and Claude do not match patterns against templates — they generate text by predicting the most likely continuation of a sequence, conditioned on a vast corpus of training data. This gives them remarkable abilities: they can summarize documents, translate between languages, answer questions across many domains, write code in dozens of programming languages, and engage in nuanced reasoning about complex topics [7].

However, these models remain fundamentally text generators. They can describe how to solve a differential equation but cannot actually solve one. They can explain the steps of a flash calculation but cannot execute the iteration. They can write a Python script but cannot run it. Their world model is linguistic, not physical. They know what correct answers look like but cannot reliably produce correct answers for quantitative problems.